Computer Technology and Voting

Jeremy Epstein, Senior Computer Scientist, SRI International

Technology in the form of tabulators has been used for tallying votes for about 100 years. ([1] provides a detailed history of tabulators and computers in voting and elections.) Starting in the mid-1970s, Roy Saltman of the National Bureau of Standards (now the National Institute of Standards and Technology) wrote several reports pointing out risks in the use of computers in elections [2][3]. Other far-sighted individuals pointed out the risks as well, including Peter Neumann, who in the first issue of the RISKS Forum [4] quoted a New York Times article on problems with software in vote tabulating systems [5] and wrote “This topic is just warming up”. A later New York Times article [6] noted “In Indiana and West Virginia, charges were made that company officials and the local voting authorities altered vote totals of several races, including those for two House seats, by secretly manipulating the computers. In addition, computer consultants hired by the plaintiffs in three states and two independent experts working for The New York Times have examined the programs used in Indiana and West Virginia. All five concluded separately that the computer programs were poorly written and highly vulnerable to secret manipulation or fraud.”

The first generation of Direct Recording Electronic (DRE) computer systems, introduced in this time period, used simple computers and pushbuttons for voters to record their votes, thus going beyond the tabulation heretofore in use. Examination of the first-generation DRE systems before use was cursory, as they were treated largely as identical to the lever-based mechanical voting systems they replaced. Computer scientists and security experts generally were not engaged in the debate. Rebecca Mercuri’s dissertation [7], defended just one week before the 2000 US Federal election, pointed out the risks of computerized vote counting and proposed the “Mercuri Method” for counting ballots on electronic voting systems: voters are shown a printed summary of their vote before casting, to be used in case of a recount and to guard against software flaws, accidental or intentional, that lead to miscounted votes. While limitations in her approach have since become clear, her dissertation marks the beginning of computer scientists paying attention to the issue.

However, little attention was paid to the risks until after the 2000 Federal election, which introduced the terms “butterfly ballots” and “hanging chads” to the American lexicon and raised awareness of voting system accuracy. The Help America Vote Act (HAVA) of 2002 launched America towards purchasing second-generation DRE voting systems everywhere. These systems generally used touchscreens for voters to select their votes, and offered the promise of making private voting available to voters with disabilities, as well as the patina of modernization.

The story of the past decade is one of (mis)use of technology. HAVA encouraged states to use Federal funds to purchase DREs, but without first establishing standards for reliability, security, usability, or accessibility. While those standards were being developed, academics and advocates were analyzing systems. In 2003, an academic group analyzed the leaked source code for the Diebold AccuVote DRE [8], and by doing so showed the security risks in DREs and set off a fierce battle that raged through the next decade. The California Top To Bottom Review [9] conclusively established the insecurity of DREs, led directly to the decertification of most DREs in California, and set in motion the national move towards optical scan voting. Today, while efforts to pass Federal laws to move away from DREs have failed, the change is underway at the state level – not only because of security concerns, but also because optical scan systems result in shorter lines on election day (since extra pencils for more voters are far cheaper than additional machines). (Some states are lagging behind, notably Maryland and Georgia, but most other states have either changed over or are in the process of changing.) However, optical scan technology for voting is not perfect: despite generations of taking the SAT and other standardized tests, many voters have difficulty understanding how to mark an optical scan ballot by coloring in the circles. This has widened the voting gap for poorly educated, low-income, and elderly voters, who are less likely to have encountered optical scan tests in their early years and hence more likely to mismark their ballots, resulting in disenfranchisement.

Academic research into alternate methods for voting has yielded surprising results. A summary screen of selected candidates does not help voters detect errors; an experiment showed that even when the voting system deliberately changed the results before the summary screen, most voters did not notice [10][11]. Usability plays a critical role in voter actions; an accidental experiment in Florida indicates that even color bars and placement of contests on screens can cause many voters to miss contests [12][13]. And giving voters the opportunity to vote on contests in any order also increases the number of races missed [14]. Voters like DREs, but don’t trust their accuracy. In short, the social angle on voting is critical to helping voters cast their votes as intended.

The changes in voting equipment have not happened in a vacuum, though. While security has been a primary driver, other concerns have included accessibility to voters with disabilities, and support for voters using languages other than English. ([1] provides extensive information on voting for voters with disabilities.) The first and second generations of DREs included limited support for voters with disabilities – most notably audio ballots. However, these systems were developed without significant consultation with disability specialists, and were effectively unusable by their intended audiences. For example, audio ballots for voters with limited vision rely on complex navigation schemes, and typically take those voters 10 times longer to use than a visual display takes voters without disabilities. And even the mechanical aspects of DREs limit their usefulness: some are designed so that a wheelchair cannot get close enough for the voter to use the machine; others cannot be set at an appropriate angle for voting from a wheelchair. Such social aspects of the voting experience are outside the normal scope of computer science, but must be addressed as part of solving the “computer” problem.

Other drivers have included making the lives of poll workers and election officials less stressful – and recognizing the critical roles they play in accurate and secure elections. Computer scientists habitually write manuals that describe how to operate software, but only in recent years have we seen increased understanding that users not only do not read manuals, but will also take shortcuts to make their lives easier, even if those shortcuts impede the proper operation of the system.

Meanwhile, the ubiquity of the internet has prompted another headlong rush, this time into internet voting, again without considering the implications. Despite the assumption that internet voting would increase turnout, especially among young people, studies so far indicate that internet voting has no effect on overall turnout, simply reducing in-person voting by an equivalent number of voters. And surprisingly, internet voting is most popular among middle-aged voters, with younger voters preferring the social aspect of going to the polls in person.

Internet voting unquestionably increases security risks, since now elections can be attacked by anyone anywhere in the world. Perhaps less obviously, it also exacerbates the social issues in voting. For example, voter coercion, which is limited (although certainly not eliminated) by in-person voting, becomes much easier. As noted in a recent comment on a British Columbia site about their proposed use of internet voting [15], “Internet voting will, in effect, transfer the vote of many women and of some adults who live with a dominant spouse, parent or care giver. I have often heard women say that they vote differently than their husband or father thinks they do. […] Sometimes they voted contrary to the signs in their front yard.” Technology, in this case, can work against the social good of giving each person his/her secret vote.

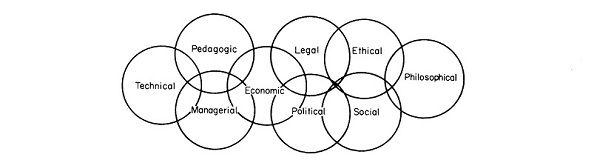

What will the next forty years bring in voting? Some in younger generations have suggested a move to direct democracy (where the public votes directly on issues), rather than representative democracy. However, given the subtlety of issues and the need for compromise, direct democracy is unlikely to have happy results, and technology is unlikely to be the answer. In the meantime, computer scientists will need to work more closely with psychologists, political scientists, usability and accessibility experts, and election experts to design systems that guarantee security, privacy, accuracy, and accessibility to all voters in modern democracies.

[1] “Broken Ballots: Will Your Vote Count?”, Douglas Jones and Barbara Simons, 2012.

[2] NBSIR 75-687, “Effective Use of Computing Technology in Vote-Tallying”, Roy Saltman, National Bureau of Standards, March 1975.

[3] NBS Special Publication 500-158, “Accuracy, Integrity, and Security in Computerized Vote-Tallying”, Roy Saltman, National Bureau of Standards, August 1988.

[4] RISKS Forum, volume 1, number 1. http://catless.ncl.ac.uk/Risks/1.01.html

[5] “Voting By Computer Requires Standards, A U.S. Official Says”, David Burnham, New York Times, July 30, 1985. http://www.nytimes.com/1985/07/30/us/voting-by-computer-requires-standards-a-us-official-says.html

[6] “California Official Investigating Computer Voting System Security”, David Burnham, New York Times, December 18, 1985. http://www.nytimes.com/1985/12/18/us/california-official-investigating-computer-voting-system-security.html

[7] Rebecca Mercuri, “Electronic Vote Tabulation: Checks & Balances”, University of Pennsylvania, 2000.

[8] “Analysis of an Electronic Voting System”, Tadayoshi Kohno, Adam Stubblefield, Aviel D. Rubin, Dan S. Wallach, IEEE Symposium on Security and Privacy 2004. (First appeared as Johns Hopkins University Information Security Institute Technical Report TR-2003-19, July 23, 2003.)

[9] Top To Bottom Review, California Secretary of State, August 2007. http://www.sos.ca.gov/voting-systems/oversight/top-to-bottom-review.htm

[10] “Do Voters Really Fail to Detect Changes to their Ballots? An Investigation of Voter Error Detection”, Acemyan, C.Z., Kortum, P. & Payne, D., Proceedings of the Human Factors and Ergonomics Society, Santa Monica, CA: Human Factors and Ergonomics Society.

[11] “The Usability of Electronic Voting Machines and How Votes Can Be Changed Without Detection”, Sarah P. Everett, PhD dissertation, Rice University, May 2007.

[12] “Ballot Formats, Touchscreens, and Undervotes: A Study of the 2006 Midterm Elections in Florida”, Laurin Frisina, Michael C. Herron, James Honaker, Jeffrey B. Lewis, Election Law Journal.

[13] “Florida’s District 13 Election in 2006: Can Statistics Tell Us Who Won?”, Arlene Ash and John Lamperti

[14] “How to build an undervoting machine: Lessons from an alternative ballot design”, Greene, K. K., Byrne, M. D., & Goggin, S. N., Journal of Election Technology and Systems, 2013.

[15] From comments on the report of the Independent Panel on Internet Voting, October 2013. http://internetvotingpanel.ca/
